Companies need more and more data storage space: e-mail, social media, and other communication systems accumulate ever larger volumes of data. On top of this demand come the sensors of the Internet of Things, which produce data continuously.

No wonder the amount of data produced by humanity is growing exponentially. According to a study commissioned by hard drive manufacturer Seagate and conducted by the US market research institute IDC, the global volume of data will grow to 175 zettabytes by 2025. Expressed in bytes, that’s 175 followed by 21 zeros. If this mass of data were stored on conventional DVDs, the stack would be 23 times as high as the distance between the Earth and the Moon.

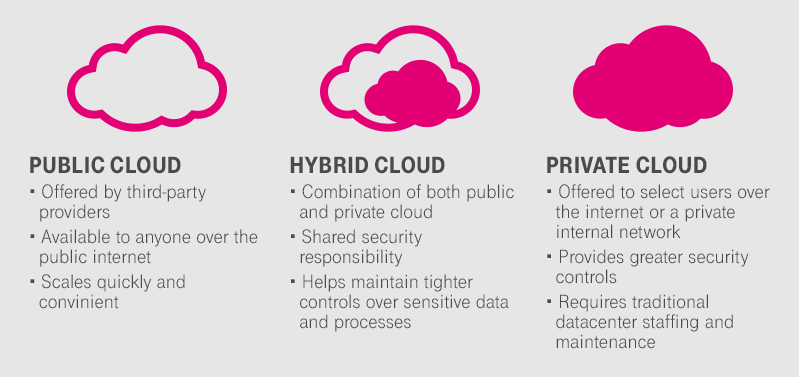

An exploding data universe, in which data becomes the raw material for future business, also requires storage space that is flexibly available. Companies therefore face the challenge of finding large capacities at low cost. Cloud storage, as offered by the Open Telekom Cloud, makes it possible to cope with such huge mountains of data.